Three Landscapes, One Security Shift: What OWASP's Q2 2026 Update Is Really Saying

OWASP published three separate AI security landscapes in Q2 2026: one for LLM and GenAI applications, one for agentic AI, and a first-ever Red Teaming Landscape. Each maps a categorically different attack surface. This breakdown explains what changed, why agentic security requires different controls, and what continuous red teaming means for teams shipping agents.

This quarter, the OWASP GenAI Security Project published three AI security landscapes: one for agentic AI, one for LLM and GenAI applications, and for the first time, a dedicated Red Teaming Landscape. As an active contributor to the OWASP GenAI Security Project community, I want to explain why the decision to publish three separate documents matters more than anything inside any one of them.

Straiker appears in all three. I'll come back to why that is significant. But first, the landscapes themselves.

Agentic AI security is its own category. OWASP has now made that official.

The Q2 2026 Agentic AI Security Solutions Landscape covers what happens when models gain autonomy. Agentic systems call tools, coordinate with other agents, persist memory across sessions, and execute multi-step tasks against real systems. The security boundary is no longer the model. It is every decision the model makes and every system it can reach.

As a useful frame: when you interact with an AI as a conversational interface, the human is still the control plane. The model generates a response. You decide what to do with it. Every action passes through a person.

When that same model is deployed as an agentic workflow, that changes. It is reasoning and acting across multiple systems with no human reviewing each step. It is calling APIs, reading and writing files, handing context to downstream agents, and making runtime decisions continuously.

That transition from reactive to autonomous is where existing security controls stop being sufficient.

Input and output guardrails were designed for the first model. They were not designed to catch prompt injection through a multi-turn reasoning chain, privilege escalation through tool invocation, or behavioral drift caused by a knowledge base update that never touched your codebase. Those risks emerge at execution time. They require detection at execution time.

The agentic landscape catalogs solutions across four areas largely absent from the LLM landscape:

- Multi-agent orchestration security. When agents share context and delegate authority across a pipeline using protocols like MCP or A2A, a compromised instruction at one node can propagate across the system. Tooling here has to trace reasoning lineage across agents, not validate individual prompts in isolation.

- Runtime tool and plugin security. A vetted external tool can change its behavior without changing its API contract. When that behavior feeds into agentic reasoning, the agent acts on it with no code change and no deployment event. Static scans do not surface this class of risk.

- Memory and context scope enforcement. Agentic systems persist context across sessions in ways that create cross-session leakage risks. Scope enforcement at the memory layer does not exist in traditional LLM security frameworks.

- Behavioral drift detection. Agents can change behavior through prompt updates, tool configuration changes, or knowledge base updates, none of which trigger a standard deployment pipeline. Tracking behavioral delta across builds, separate from code diff, is a requirement unique to agentic systems.

This is the gap the agentic landscape is mapping. Static analysis and model-level evaluations test what a system contains. Agentic security has to test how a system behaves, using detection that combines context and behavioral analysis to catch risks that only emerge at execution time.

The LLM and GenAI landscape: a solid reference for where most teams currently are

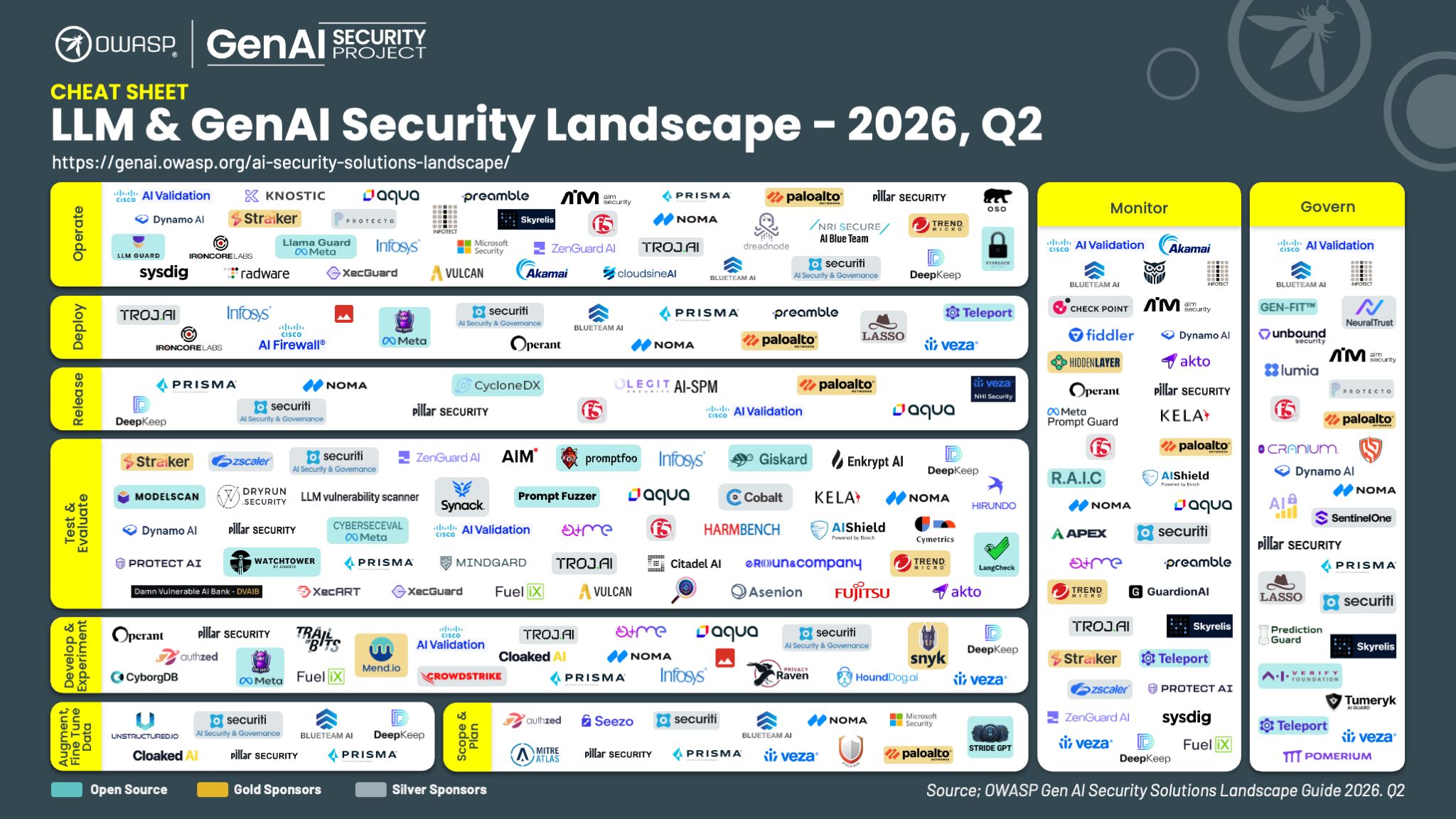

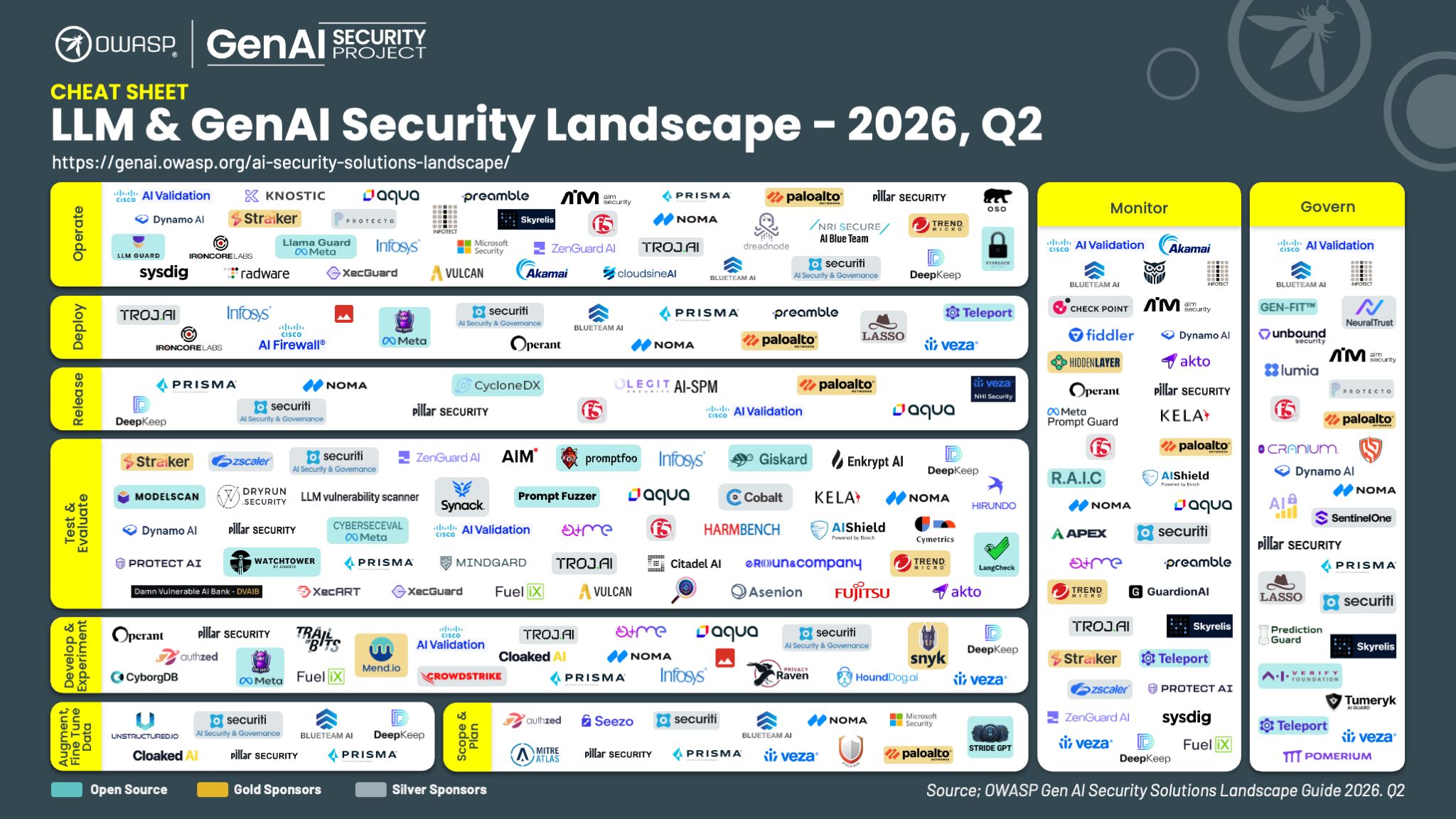

The Q2 2026 LLM and GenAI Applications landscape covers what most teams think of when they hear "AI security": input and output guardrails, model evaluation, data poisoning, access controls around inference endpoints. It catalogs open-source and commercial solutions across the full GenAI development lifecycle, organized by stage coverage and threat category.

If your team is primarily building LLM-powered applications rather than agentic workflows, this is a useful reference for auditing your current tooling coverage.

The more important signal is what the agentic landscape covers that this one does not. Publishing them as two distinct documents is OWASP making a formal statement: these are categorically different problems with different attack surfaces, different failure modes, and different controls required.

The Red Teaming Landscape: the most important new document in this set

OWASP published its first-ever Red Teaming Landscape last week on Friday April 10th, and it deserves careful attention.

It maps tooling across the full development lifecycle, from fine-tuning and experimentation through testing, release, deployment, and production operations, with monitoring and governance cutting across all of it. The structure is intentional. Most teams treat red teaming as something that happens before a release. Run the evals, check the box, ship it. The landscape pushes back on that directly.

The system you ship on day one is not the system running sixty days later. The gaps that hurt you tend to open up after the release gate, not before it.

Red teaming a model is a tractable problem. You have an artifact with defined inputs and outputs, you stress test it, you decide whether it meets your bar. Red teaming an agentic system is harder because the test surface is not fixed. By the time you finish a testing cycle, a tool the agent relies on has updated its behavior, a new MCP server has been added to the environment, a downstream agent in the same pipeline has been modified by a different team. None of that shows up in your code diff.

Adversarial simulation against an agentic footprint has to run continuously and has to generate new test cases from what it finds, because the thing you are testing keeps changing. A static eval suite run on a quarterly cadence is not red teaming an agent. It is red teaming a version of the agent that no longer exists.

What it means that Straiker appears in all three

Most vendors in the AI security space occupy one of these landscapes. Some occupy two. Appearing in all three reflects something specific about how Straiker is built: the architecture was designed around the full lifecycle of an agentic system, not a single control point.

It starts with visibility. Before you can test or defend an agentic system, you need to know what agents exist, what tools they are calling, and what MCP servers are in scope. Straiker Discover AI provides that inventory layer. For most teams, this is the first time they have a complete picture of their agentic footprint.

From there, you attack it. Straiker Ascend AI runs autonomous adversarial simulations against that footprint. Adversarial agents probe reasoning paths, test tool boundaries, and surface contract violations across multi-turn sessions and at every stage from build through runtime. Each detected exploit becomes a new test case. The reason it has to work this way is what the Red Teaming Landscape makes visible: the thing you are testing keeps changing, so your testing has to as well.

Then you enforce. Straiker Defend AI applies guardrails at the agent layer, not the API layer. Controls that do not understand agent context, tool invocation state, or reasoning scope are not defending agentic systems. They are defending a surface the architecture has already moved past.

The sequence matters: discovery before testing, testing before deployment, enforcement at runtime.

If your team is shipping AI agents

The fact that OWASP published three separate landscapes this quarter is the community formally drawing the line between these categories. The security tooling and practices that address LLM applications do not fully address agentic systems. The testing methodology that works for a model does not work for an agent.

If you are building agentic systems, multi-step workflows, tool-calling pipelines, or multi-agent architectures, the Agentic AI landscape and the Red Teaming Landscape are the documents your next architecture review needs. We should talk.

This quarter, the OWASP GenAI Security Project published three AI security landscapes: one for agentic AI, one for LLM and GenAI applications, and for the first time, a dedicated Red Teaming Landscape. As an active contributor to the OWASP GenAI Security Project community, I want to explain why the decision to publish three separate documents matters more than anything inside any one of them.

Straiker appears in all three. I'll come back to why that is significant. But first, the landscapes themselves.

Agentic AI security is its own category. OWASP has now made that official.

The Q2 2026 Agentic AI Security Solutions Landscape covers what happens when models gain autonomy. Agentic systems call tools, coordinate with other agents, persist memory across sessions, and execute multi-step tasks against real systems. The security boundary is no longer the model. It is every decision the model makes and every system it can reach.

As a useful frame: when you interact with an AI as a conversational interface, the human is still the control plane. The model generates a response. You decide what to do with it. Every action passes through a person.

When that same model is deployed as an agentic workflow, that changes. It is reasoning and acting across multiple systems with no human reviewing each step. It is calling APIs, reading and writing files, handing context to downstream agents, and making runtime decisions continuously.

That transition from reactive to autonomous is where existing security controls stop being sufficient.

Input and output guardrails were designed for the first model. They were not designed to catch prompt injection through a multi-turn reasoning chain, privilege escalation through tool invocation, or behavioral drift caused by a knowledge base update that never touched your codebase. Those risks emerge at execution time. They require detection at execution time.

The agentic landscape catalogs solutions across four areas largely absent from the LLM landscape:

- Multi-agent orchestration security. When agents share context and delegate authority across a pipeline using protocols like MCP or A2A, a compromised instruction at one node can propagate across the system. Tooling here has to trace reasoning lineage across agents, not validate individual prompts in isolation.

- Runtime tool and plugin security. A vetted external tool can change its behavior without changing its API contract. When that behavior feeds into agentic reasoning, the agent acts on it with no code change and no deployment event. Static scans do not surface this class of risk.

- Memory and context scope enforcement. Agentic systems persist context across sessions in ways that create cross-session leakage risks. Scope enforcement at the memory layer does not exist in traditional LLM security frameworks.

- Behavioral drift detection. Agents can change behavior through prompt updates, tool configuration changes, or knowledge base updates, none of which trigger a standard deployment pipeline. Tracking behavioral delta across builds, separate from code diff, is a requirement unique to agentic systems.

This is the gap the agentic landscape is mapping. Static analysis and model-level evaluations test what a system contains. Agentic security has to test how a system behaves, using detection that combines context and behavioral analysis to catch risks that only emerge at execution time.

The LLM and GenAI landscape: a solid reference for where most teams currently are

The Q2 2026 LLM and GenAI Applications landscape covers what most teams think of when they hear "AI security": input and output guardrails, model evaluation, data poisoning, access controls around inference endpoints. It catalogs open-source and commercial solutions across the full GenAI development lifecycle, organized by stage coverage and threat category.

If your team is primarily building LLM-powered applications rather than agentic workflows, this is a useful reference for auditing your current tooling coverage.

The more important signal is what the agentic landscape covers that this one does not. Publishing them as two distinct documents is OWASP making a formal statement: these are categorically different problems with different attack surfaces, different failure modes, and different controls required.

The Red Teaming Landscape: the most important new document in this set

OWASP published its first-ever Red Teaming Landscape last week on Friday April 10th, and it deserves careful attention.

It maps tooling across the full development lifecycle, from fine-tuning and experimentation through testing, release, deployment, and production operations, with monitoring and governance cutting across all of it. The structure is intentional. Most teams treat red teaming as something that happens before a release. Run the evals, check the box, ship it. The landscape pushes back on that directly.

The system you ship on day one is not the system running sixty days later. The gaps that hurt you tend to open up after the release gate, not before it.

Red teaming a model is a tractable problem. You have an artifact with defined inputs and outputs, you stress test it, you decide whether it meets your bar. Red teaming an agentic system is harder because the test surface is not fixed. By the time you finish a testing cycle, a tool the agent relies on has updated its behavior, a new MCP server has been added to the environment, a downstream agent in the same pipeline has been modified by a different team. None of that shows up in your code diff.

Adversarial simulation against an agentic footprint has to run continuously and has to generate new test cases from what it finds, because the thing you are testing keeps changing. A static eval suite run on a quarterly cadence is not red teaming an agent. It is red teaming a version of the agent that no longer exists.

What it means that Straiker appears in all three

Most vendors in the AI security space occupy one of these landscapes. Some occupy two. Appearing in all three reflects something specific about how Straiker is built: the architecture was designed around the full lifecycle of an agentic system, not a single control point.

It starts with visibility. Before you can test or defend an agentic system, you need to know what agents exist, what tools they are calling, and what MCP servers are in scope. Straiker Discover AI provides that inventory layer. For most teams, this is the first time they have a complete picture of their agentic footprint.

From there, you attack it. Straiker Ascend AI runs autonomous adversarial simulations against that footprint. Adversarial agents probe reasoning paths, test tool boundaries, and surface contract violations across multi-turn sessions and at every stage from build through runtime. Each detected exploit becomes a new test case. The reason it has to work this way is what the Red Teaming Landscape makes visible: the thing you are testing keeps changing, so your testing has to as well.

Then you enforce. Straiker Defend AI applies guardrails at the agent layer, not the API layer. Controls that do not understand agent context, tool invocation state, or reasoning scope are not defending agentic systems. They are defending a surface the architecture has already moved past.

The sequence matters: discovery before testing, testing before deployment, enforcement at runtime.

If your team is shipping AI agents

The fact that OWASP published three separate landscapes this quarter is the community formally drawing the line between these categories. The security tooling and practices that address LLM applications do not fully address agentic systems. The testing methodology that works for a model does not work for an agent.

If you are building agentic systems, multi-step workflows, tool-calling pipelines, or multi-agent architectures, the Agentic AI landscape and the Red Teaming Landscape are the documents your next architecture review needs. We should talk.